Size-frequency distribution of geologic features

The size-frequency distribution of geologic phenomena is closely related to scale invariance. In particular, we recognize that small objects are more common than large objects. What is less intuitively obvious is that there is frequently a very specific relationship between the number of small objects and the number of large objects.

The number of objects of a given size tends to follow a power-law distribution. If you were to graph the count of, say, faults greater than a certain size on one axis against size on the other, and if this graph were a log-log graph, the plot would be a straight line.

Furthermore, the slope of that line could be used to characterize the population of the phenomena in question, being described sometimes as a measure of the fractal dimension of the system.
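A minimal sketch of how such a slope might be estimated is given below. It assumes the sizes are available as a simple list of measurements, uses a synthetic Pareto sample in place of real fault data, and fits the cumulative count with an ordinary least-squares line. The function name `loglog_slope` and all numerical values are hypothetical choices for illustration only.

```python
import numpy as np

def loglog_slope(sizes):
    """Fit log10 N(>= s) = intercept + slope * log10(s).

    Returns (slope, intercept) of the least-squares line.
    """
    s = np.sort(np.asarray(sizes, dtype=float))
    # Cumulative count: number of objects at least as large as s[i]
    n_ge = np.arange(len(s), 0, -1)
    slope, intercept = np.polyfit(np.log10(s), np.log10(n_ge), 1)
    return slope, intercept

# Synthetic "fault sizes" (assumption): Pareto sample with exponent 1.5
# and minimum size 1, standing in for a real data set.
rng = np.random.default_rng(0)
sizes = rng.pareto(1.5, 5000) + 1.0

slope, intercept = loglog_slope(sizes)
print(f"fitted log-log slope: {slope:.2f}")
```

A least-squares fit on the cumulative plot is the simplest approach but is sensitive to the sparse large-size tail; with real data, a maximum-likelihood estimate of the exponent may be preferable.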

It is easy to imagine applying this to a real scenario.

The above is a photo of a channelized debris flow in the Yakataga Formation exposed on Middleton Island, in the Gulf of Alaska. A close look at the makeup of the debris flow would reveal sediments in a continuous gradation from cobble-sized fragments down to clay. If you counted the particles, you would expect to find a great many more clay-sized fragments than cobble-sized fragments.

Flicker noise (also known as 1/f distribution) is held to be the dominant scaling law for geologic phenomena and is a marker of scale invariance. It is observed in borehole logs (Bean, 1996), fractures (Walsh et al., 1991), ocean temperature distributions through time (Fraedrich et al., 2004), avalanches, forest fires, earthquakes, extinctions, and many other phenomena (Turcotte, 1999).

Sediment grain-size distributions

For several decades there has been an argument about the nature of sediment grain-size distributions in real deposits. One school of thought (let's call them the statistical school) held that sediment grain sizes should be log-normally distributed, and could therefore be expressed in terms of a mean and a standard deviation against a logarithmic size scale (below).

Log-normal data distribution.

As outlined in Limpert et al. (2001), there are many natural processes that are believed to yield log-normal size distributions. My opinion is that the normal (or log-normal) concept is overdone because of the nature of introductory statistical education. For many who have had a rudimentary introduction to statistics, all distributions are normal (or log-normal), possibly with some modification to the tails.

The alternative approach (following Bagnold, 1941) held that there are two competing processes responsible for sediment deposition--the transport to the area of interest and the transport of material away from the area of interest. Each of these processes could have its own relationship between probability and grain-size, so the size-distribution of the particles actually deposited could be asymmetric.

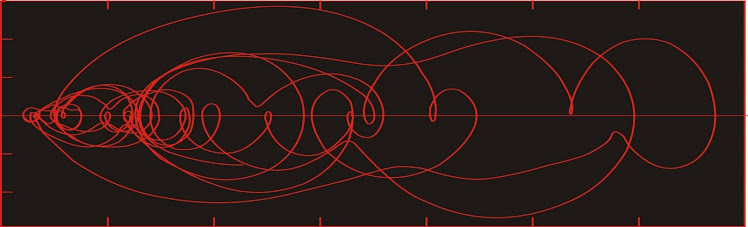

The log-hyperbolic distribution is so named because the distribution has the form of a hyperbola when plotted on a log-log graph. The distribution is described by four parameters. Phi and gamma describe the slopes of the two asymptotes, which reflect the likelihood that any particle will be carried to the area of interest (gamma) and the likelihood that any particle will be removed from the area of interest (phi). The other two parameters (delta and pi) are reflections of how closely each limb of the hyperbola follows its asymptote.

As an aside, returns on investment portfolios are usually described by normal distributions. However, it is frequently recognized that the tails of the distribution are "fatter" than should be the case (meaning that extremely large annual gains and losses are more common than expected). Possibly these distributions should be hyperbolic instead of normal. Indeed, Nassim Taleb's (2007) black swan events may fall into this category.

The log-normal distribution traces a parabola in a log-log plot. It is described by two parameters: mu (the mean) and sigma (the standard deviation). The weights of the tails in the log-hyperbolic distribution will be greater than those of the log-normal distribution, to a typical investor's regret.
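The difference between the two shapes can be sketched numerically. The example below assumes one common parametrization of the hyperbolic log-density, in which the left limb has slope phi and the right limb has slope minus gamma; which limb corresponds to which process in the text above is not something this sketch settles. The location parameter is called `loc` here, and all parameter values are hypothetical.

```python
import numpy as np

def log_density_lognormal(u, mu, sigma):
    """Log of the Gaussian density of u = ln(size): a parabola in u."""
    return -0.5 * ((u - mu) / sigma) ** 2 - np.log(sigma * np.sqrt(2.0 * np.pi))

def log_density_loghyperbolic(u, phi, gamma, delta, loc):
    """Unnormalized log-density of u = ln(size): a hyperbola in u.

    Assumed parametrization: limb slopes tend to +phi (left) and -gamma (right).
    """
    alpha = 0.5 * (phi + gamma)
    beta = 0.5 * (phi - gamma)
    return -alpha * np.sqrt(delta ** 2 + (u - loc) ** 2) + beta * (u - loc)

u = np.linspace(-50.0, 50.0, 2001)   # u = ln(size), deliberately wide range

# Far from the centre, the hyperbola's limbs approach straight lines.
hyp = log_density_loghyperbolic(u, phi=2.0, gamma=0.5, delta=1.0, loc=0.0)
left_slope = (hyp[1] - hyp[0]) / (u[1] - u[0])
right_slope = (hyp[-1] - hyp[-2]) / (u[-1] - u[-2])

# The log-normal log-density is exactly quadratic, so it keeps steepening.
quad = np.polyfit(u, log_density_lognormal(u, mu=0.0, sigma=1.0), 2)

print(f"hyperbola limb slopes: {left_slope:.3f}, {right_slope:.3f}")
print(f"parabola curvature coefficient: {quad[0]:.3f}")
```

Because the hyperbola's limbs fall off linearly in log-log space while the parabola falls off quadratically, the log-hyperbolic tails are the heavier of the two.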

At present it appears that those who believe grain-size distributions are log-hyperbolic hold the upper hand. The support that exists for log-normal descriptions stems from the fact that they are more easily described.

Time distribution and self-organized criticality

Bak et al. (1987) studied a model for the growth of a sandpile by dropping individual grains of sand over a two-dimensional grid. Under a prescribed circumstance, the addition of a grain of sand would cause an avalanche, whereby one grain would cascade one gridpoint to the west (say) and another to the north (say).

This was an improvement over the typical 2-dimensional model where the grains of sand were dropped along a line. In the 2-d model, the result is very simple--the pile of sand grows until the slope everywhere reaches the angle of repose, after which every subsequent grain of sand cascades down the side and off the pile. Not very interesting.

Bak et al. (1987) considered that the 3-d sandpile might behave in an analogous fashion to the 2-d model. The sandpile would grow until the slopes reached a critical threshold, after which a single grain of sand would cause a massive avalanche. The trouble with this notion is that it did not seem logical--that a system would spontaneously evolve to a point of maximum instability.

In carrying out the experiment, they discovered that instead of a long period of no avalanches followed by a massive avalanche, there was actually a continuous stream of avalanches of varying sizes. The size distribution of the avalanches had a 1/f distribution in time as well as in space.
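A sandpile experiment of this kind can be sketched in a few lines. The version below is an assumption rather than a reproduction of the original paper: it uses the commonly taught height formulation, in which a cell holding four or more grains topples and passes one grain to each of its four neighbours, with grains falling off the open edges. The grid size, grain count, and function name `drop_grains` are hypothetical.

```python
import numpy as np

TOPPLE_AT = 4  # a cell holding this many grains becomes unstable (assumed rule)

def drop_grains(n_grains, size=10, seed=0):
    """Drop grains one at a time on a size x size grid.

    Returns the final grid and the number of topplings triggered by each grain.
    """
    rng = np.random.default_rng(seed)
    grid = np.zeros((size, size), dtype=int)
    avalanche_sizes = []
    for _ in range(n_grains):
        i, j = rng.integers(0, size, 2)
        grid[i, j] += 1
        topplings = 0
        unstable = np.argwhere(grid >= TOPPLE_AT)
        while len(unstable):
            for x, y in unstable:
                grid[x, y] -= 4
                topplings += 1
                # Pass one grain to each neighbour; grains leaving the grid are lost
                for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nx, ny = x + dx, y + dy
                    if 0 <= nx < size and 0 <= ny < size:
                        grid[nx, ny] += 1
            unstable = np.argwhere(grid >= TOPPLE_AT)
        avalanche_sizes.append(topplings)
    return grid, avalanche_sizes

grid, sizes = drop_grains(2000)
counts = np.bincount(sizes)  # counts[k] = number of grains that caused k topplings
print("largest avalanche:", max(sizes), "topplings")
```

Plotting `counts` against avalanche size on log-log axes is one way to inspect whether the simulated avalanches span a wide range of sizes rather than clustering at a single characteristic size.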

The 1/f distribution of events through time had been noted previously, but not as a general phenomenon. The Gutenberg-Richter law describing the size distribution of earthquakes is an example of 1/f noise, but this law was never treated as anything but an empirical law applicable only to earthquakes.

One significant application for flicker noise in geological phenomena is in the field of risk management. Written history over much of North America spans only a few hundred years, which is not nearly enough to establish the pattern for earthquakes with a recurrence interval of, say, a thousand years. And yet knowing the size of the thousand-year earthquake may be significant.

If you are building a nuclear power plant with an expected lifespan of fifty years, then there is roughly a 1 in 20 chance that a thousand-year earthquake will strike during its operational life. It seems prudent to design the reactor to withstand this earthquake. We establish the size of this earthquake by charting the size-frequency distribution of the earthquakes we do observe, which will have mostly been small. We can extrapolate our line of best fit to the thousand-year recurrence-interval point on the graph and calculate with reasonable confidence the moment magnitude of the earthquake with a thousand-year recurrence interval, which will be somewhat larger than any of the earthquakes we have observed to date.
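The arithmetic behind this reasoning can be sketched as follows. The example assumes that each year carries an independent 1-in-1000 chance of the event, and that the observed catalogue follows a Gutenberg-Richter relation of the form log10(N) = a - b M, where N is the annual rate of earthquakes of magnitude M or greater. The catalogue values and function names are made up for illustration.

```python
import numpy as np

def prob_at_least_one(recurrence_years, lifespan_years):
    """Chance of at least one event, assuming independent years."""
    return 1.0 - (1.0 - 1.0 / recurrence_years) ** lifespan_years

def magnitude_for_recurrence(mags, annual_rates, recurrence_years):
    """Extrapolate a fitted line log10(N) = a - b*M to a target recurrence interval."""
    slope, a = np.polyfit(mags, np.log10(annual_rates), 1)
    b = -slope
    target_rate = 1.0 / recurrence_years
    return (a - np.log10(target_rate)) / b

p = prob_at_least_one(1000, 50)
print(f"chance of a thousand-year event in fifty years: {p:.3f}")

# Hypothetical catalogue: annual rates of events at or above each magnitude,
# generated from a = 4 and b = 1 so the largest observed event recurs every 100 years.
mags = np.array([4.0, 4.5, 5.0, 5.5, 6.0])
rates = 10.0 ** (4.0 - 1.0 * mags)

m1000 = magnitude_for_recurrence(mags, rates, 1000)
print(f"extrapolated thousand-year magnitude: {m1000:.2f}")
```

With these made-up numbers the extrapolated magnitude lies a full unit beyond the largest event in the catalogue, which illustrates how far the line of best fit is being trusted outside the observed range.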

The concept of self-organized criticality has been applied to economic systems (notably stock market crashes by Sornette, 2003).

References

Bagnold, R. A., 1941. The physics of blown sand. Methuen, London.

Bak, P., Tang, C., and Wiesenfeld, K., 1987. Self-organized criticality: An explanation of 1/f noise. Physical Review Letters, 59: 381-384.

Bean, C. J., 1996. On the cause of 1/f-power spectral scaling in borehole sonic logs. Geophysical Research Letters, 23: 3119-3122.

Limpert, E., Stahel, W. A., and Abbt, M., 2001. Log-normal distributions across the sciences: keys and clues. BioScience, 51: 341-352.

Sornette, D., 2003. Why stock markets crash: critical events in complex financial systems. Princeton University Press, Princeton.

Taleb, N. N., 2007. The Black Swan: The impact of the highly improbable. Random House, New York.

Turcotte, D. L., 1999. Self-organized criticality. Reports on Progress in Physics, 62: 1377-1429.

Walsh, J., Watterson, J., and Yielding, G., 1991. The importance of small-scale faulting in regional extension. Nature, 351: 391-393.