Once again the good folks at Natural Resource Holdings (this time teamed up with Visual Capitalist) have updated their report (pdf) listing gold deposits greater than one million ounces in size.

In earlier postings I briefly discussed the expectations for the size-distribution of gold deposits, using an earlier list published by NRH and historical Nevada as examples. My conclusion was that the size-distribution of gold deposits follows a scaling law over at least a couple of orders of magnitude. There is a maximum size for gold deposits, because hydrothermal cells can likely only be so large before they become unstable and divide into smaller cells, leading to the gold of one (natural) deposit being scattered over several discrete (economic) deposits. So how do we count them?

There are minimum sizes for deposits as well, primarily for economic reasons. So our scaling law only seems to be valid over a pretty limited range.

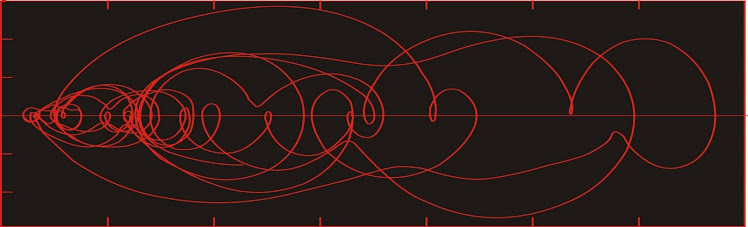

The yellow line is a possible scaling law to describe the size-distribution of gold deposits. Interestingly, its slope is 1 (pink noise), a very common scaling law in physical systems. It is quite different from the slope of 1.5 obtained from Nevada deposits. I'm not sure how to explain this, except that the Nevada deposits are almost exclusively of one type, whereas the global deposits represent all known settings.
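
For anyone who wants to try this on their own list, here is a minimal sketch of how such a slope could be estimated. It assumes the graph plots the cumulative number of deposits at least as large as a given size against that size, on log-log axes; that is one reading of the figure, not something stated in the NRH report. The sizes below are synthetic draws from a Pareto distribution, not the NRH data, and the function names are made up for illustration.

```python
import math
import random


def cumulative_counts(sizes):
    """Return (size, N) pairs, where N is the number of deposits at least that large."""
    ordered = sorted(sizes, reverse=True)
    return [(s, rank) for rank, s in enumerate(ordered, start=1)]


def loglog_slope(points):
    """Ordinary least-squares slope of log10(N) against log10(size)."""
    xs = [math.log10(s) for s, _ in points]
    ys = [math.log10(n) for _, n in points]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Synthetic sizes in M oz (NOT real deposits): Pareto with exponent 1, minimum 1 M oz.
rng = random.Random(0)
synthetic_sizes = [rng.paretovariate(1.0) for _ in range(2000)]

slope = loglog_slope(cumulative_counts(synthetic_sizes))
print(f"fitted log-log slope: {slope:.2f}")  # negative; its magnitude is the scaling exponent
```

On a cumulative plot the fitted slope comes out negative, so "slope of 1" here means a magnitude of 1. A straight least-squares fit is the simplest choice and is sensitive to the sparse large-deposit end, which is one more reason to treat any single exponent with caution.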

As before, I don't expect that we will find many more huge (> 35 M oz) deposits; but there is potential to fill in the gap below the line in the 1 M oz range. From the above graph, we would still expect to find at least 400 more deposits, mostly in the 1 - 3 M oz range.
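
The arithmetic behind an estimate like this can be sketched as follows: pin the line at some size where the census is believed to be complete, read off the count the line predicts at a smaller size, and subtract what has actually been found. Everything numeric in this sketch is a placeholder chosen for round numbers, not a value read from the graph, and the function names are hypothetical.

```python
def expected_count(size_moz, anchor_size_moz, anchor_count, slope=1.0):
    """Deposits of at least size_moz predicted by a power law through the anchor point.

    N(>=S) = anchor_count * (anchor_size / S) ** slope
    """
    return anchor_count * (anchor_size_moz / size_moz) ** slope


def shortfall(observed_count, size_moz, anchor_size_moz, anchor_count, slope=1.0):
    """How many deposits the line predicts beyond those already found (never negative)."""
    predicted = expected_count(size_moz, anchor_size_moz, anchor_count, slope)
    return max(0.0, predicted - observed_count)


# Placeholder inputs: 50 deposits of at least 10 M oz, 300 found of at least 1 M oz.
predicted_at_1moz = expected_count(1.0, anchor_size_moz=10.0, anchor_count=50)
missing = shortfall(300, 1.0, anchor_size_moz=10.0, anchor_count=50)
print(predicted_at_1moz, missing)
```

With a slope of 1, every tenfold drop in size multiplies the predicted count by ten, so small changes in where the line is pinned move the shortfall a great deal.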

In reality, the number will likely be higher, as the census would still not be complete. It would be foolish to assume there are no deposits in Antarctica, for instance, even if climate and politics make their exploitation unlikely. There are also numerous deposits on the seafloor, even if it may be a long time before control systems reach the point where they can correctly distinguish between ore and waste material while more than a km underwater.

All of this suggests that the yellow line needs to be shifted upwards--which opens up the possibility of many more deposits in the >10 M oz category still to be found. No guarantee on costs of all these, sorry.

---

Almost forgot to h/t Otto - although I'm sure I would have noticed this eventually.
